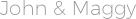

We are living in the big data era, in which all areas of science and industry generate massive amounts of data. This confronts us with unprecedented challenges regarding their analysis and interpretation. For this reason, there is an urgent need for novel machine learning and artificial intelligence methods that can help in utilizing these data. Deep learning (DL) is one such methodology currently receiving much attention (Hinton et al., 2006). DL describes a family of learning algorithms, rather than a single method, that can be used to learn complex prediction models, e.g., multi-layer neural networks with many hidden units (LeCun et al., 2015).

Recent breakthrough results in image analysis and speech recognition have generated massive interest in this field, because applications in many other domains that provide big data also seem possible. On the downside, the mathematical and computational methodology underlying deep learning models is very challenging, especially for interdisciplinary scientists. For this reason, we present in this paper an introductory review of deep learning approaches including Deep Feedforward Neural Networks (D-FFNN), Convolutional Neural Networks (CNNs), Deep Belief Networks (DBNs), Autoencoders (AEs), and Long Short-Term Memory (LSTM) networks. These models form the major core architectures of deep learning models currently used and should belong in any data scientist's toolbox. Importantly, these core architectural building blocks can be composed flexibly, in an almost Lego-like manner, to build new application-specific network architectures. Hence, a basic understanding of these network architectures is important to be prepared for future developments in AI.

Frank Emmert-Streib 1,2 *, Zhen Yang 1, Han Feng 1,3, Shailesh Tripathi 1,3 and Matthias Dehmer 3,4,5